
I am currently a Quantitative Researcher at Squarepoint. Previously, I completed my Ph.D. in the Department of Computer Science at Columbia University, where I was fortunate to be advised by Prof. Baishakhi Ray. I did my undergraduate studies at Reed College and Columbia University.


My PhD research focused mainly on testing and improving the robustness of learning-based systems such as Autonomous Driving Systems (ADSs) and image classifiers. Representative papers are highlighted.


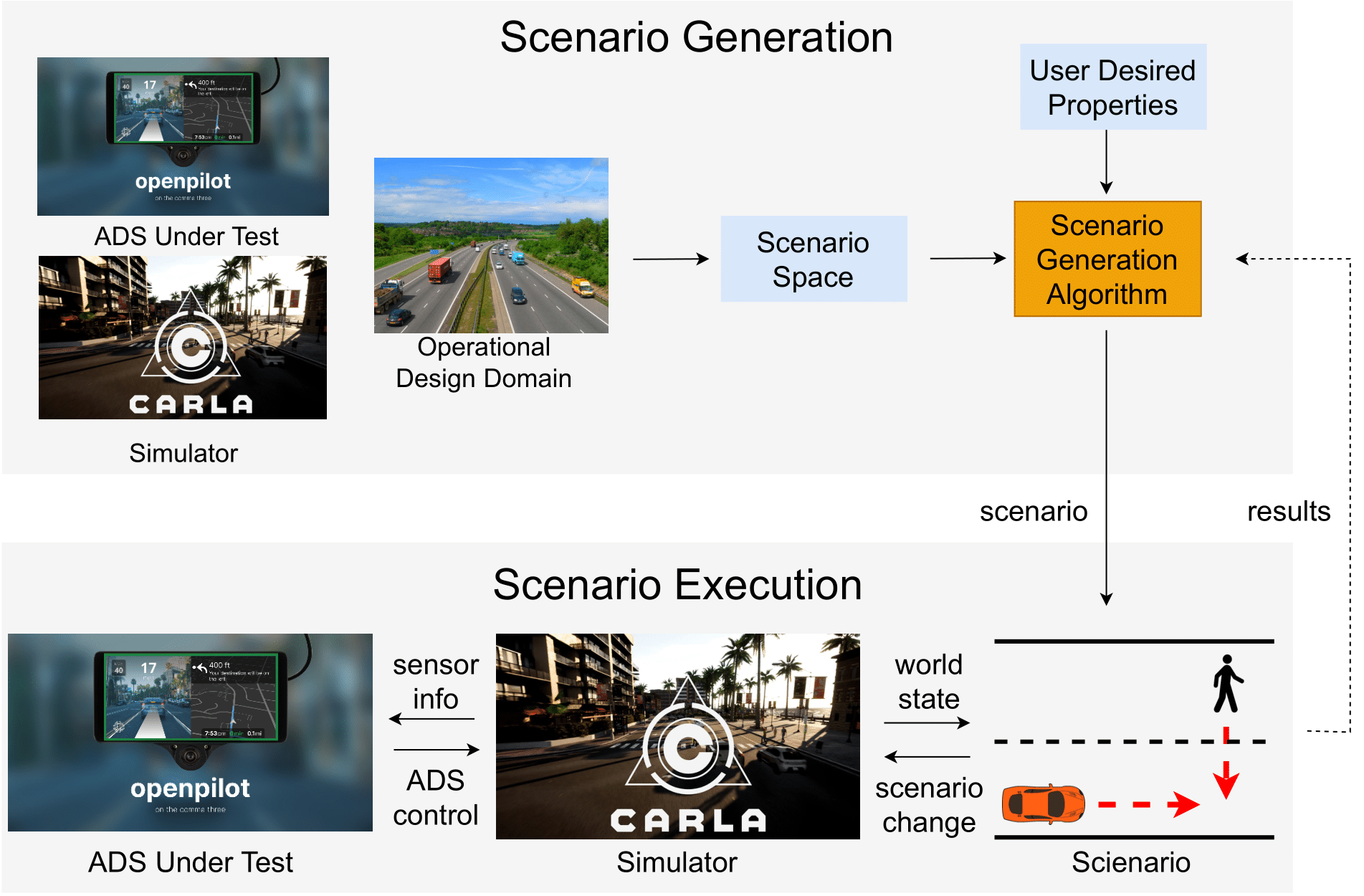

Ziyuan Zhong. PhD Dissertation, 2024. In this dissertation, we first identified that the key to efficient simulation testing of Autonomous Driving Systems (ADSs) lies in crafting optimal testing scenarios. Next, we proposed four methods (AutoFuzz, FusED, CTG, and CTG++) to efficiently construct these testing scenarios.


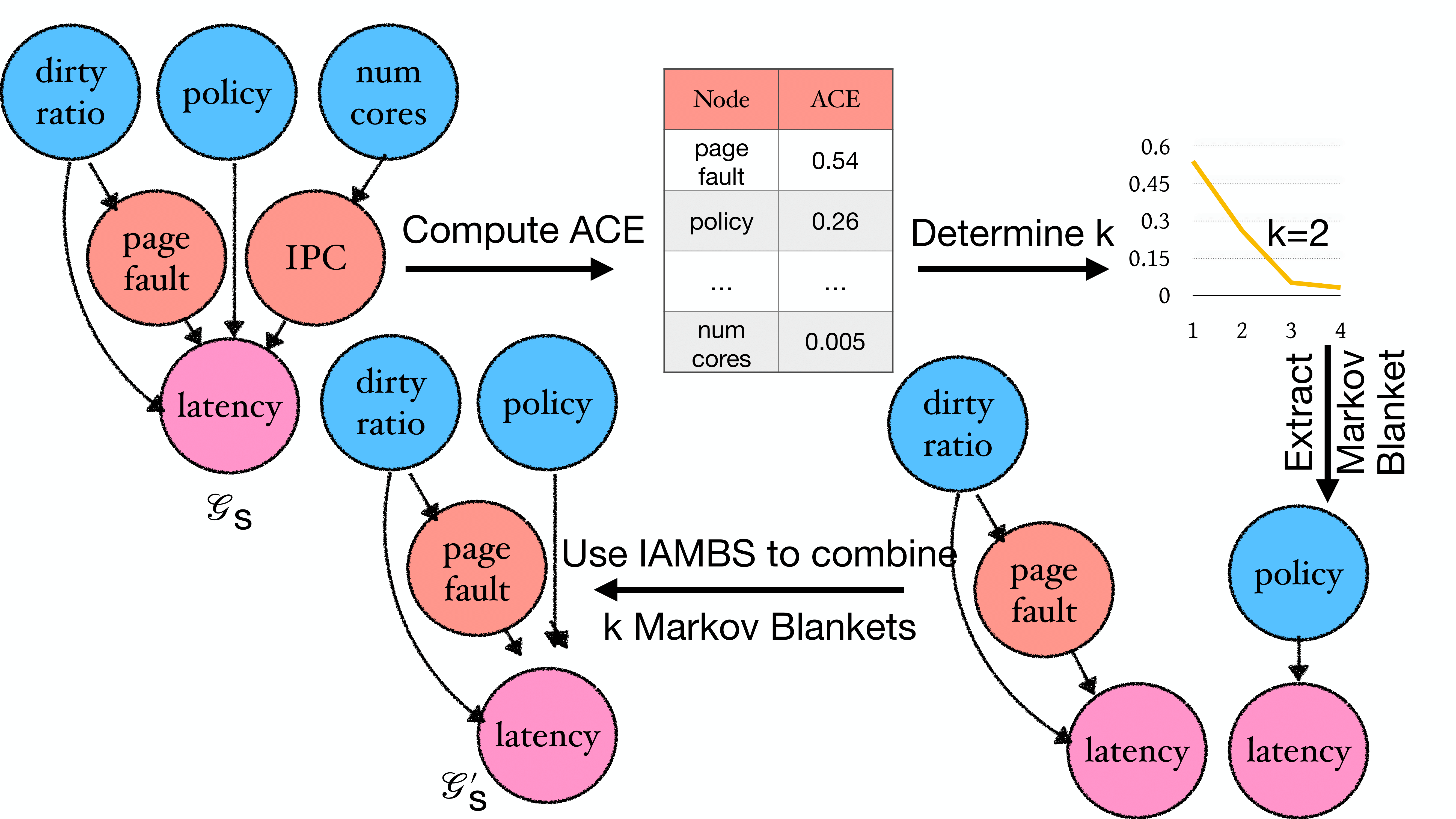

Md Shahriar Iqbal, Ziyuan Zhong, Iftakhar Ahmad, Baishakhi Ray, Pooyan Jamshidi. SoCC, 2023. We propose a method that sidesteps the limitations of existing work on system performance optimization under environmental changes. It identifies causal predictors that remain invariant across environments, which lets the optimization process operate on a reduced search space and leads to faster system performance optimization.


Ziyuan Zhong, Davis Rempe, Yuxiao Chen, Boris Ivanovic, Yulong Cao, Danfei Xu, Marco Pavone, Baishakhi Ray. CoRL (Oral Presentation), 2023. We leveraged Large Language Models (LLMs) and developed a language-guided, scene-level conditional diffusion model for language-controllable, realistic traffic generation.


Ziyuan Zhong, Davis Rempe, Danfei Xu, Yuxiao Chen, Sushant Veer, Tong Che, Baishakhi Ray, Marco Pavone. ICRA, 2023. We developed a conditional diffusion model (trained on large-scale traffic flow datasets) that can generate controllable, feasible, and realistic traffic trajectories.


Yun Tang, Yuan Zhou, Kairui Yang, Ziyuan Zhong, Baishakhi Ray, Yang Liu, Ping Zhang, Junbo Chen. arXiv, 2022. We propose a method that automatically generates a more concise map from a given complex map, reducing redundant test cases.


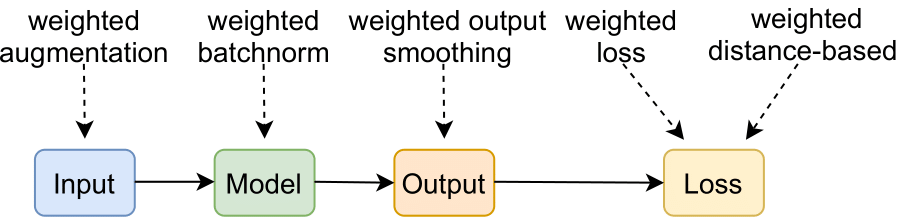

Ziyuan Zhong*, Yuchi Tian*, Conor J Sweeney, Vicente Ordonez, Baishakhi Ray. arXiv, 2022. A series of methods based on weighted regularization for repairing group-level errors of DNNs.


Ziyuan Zhong, Zhisheng Hu, Shengjian Guo, Xinyang Zhang, Zhenyu Zhong, Baishakhi Ray. ISSTA, 2022. FusED can efficiently identify fusion errors.


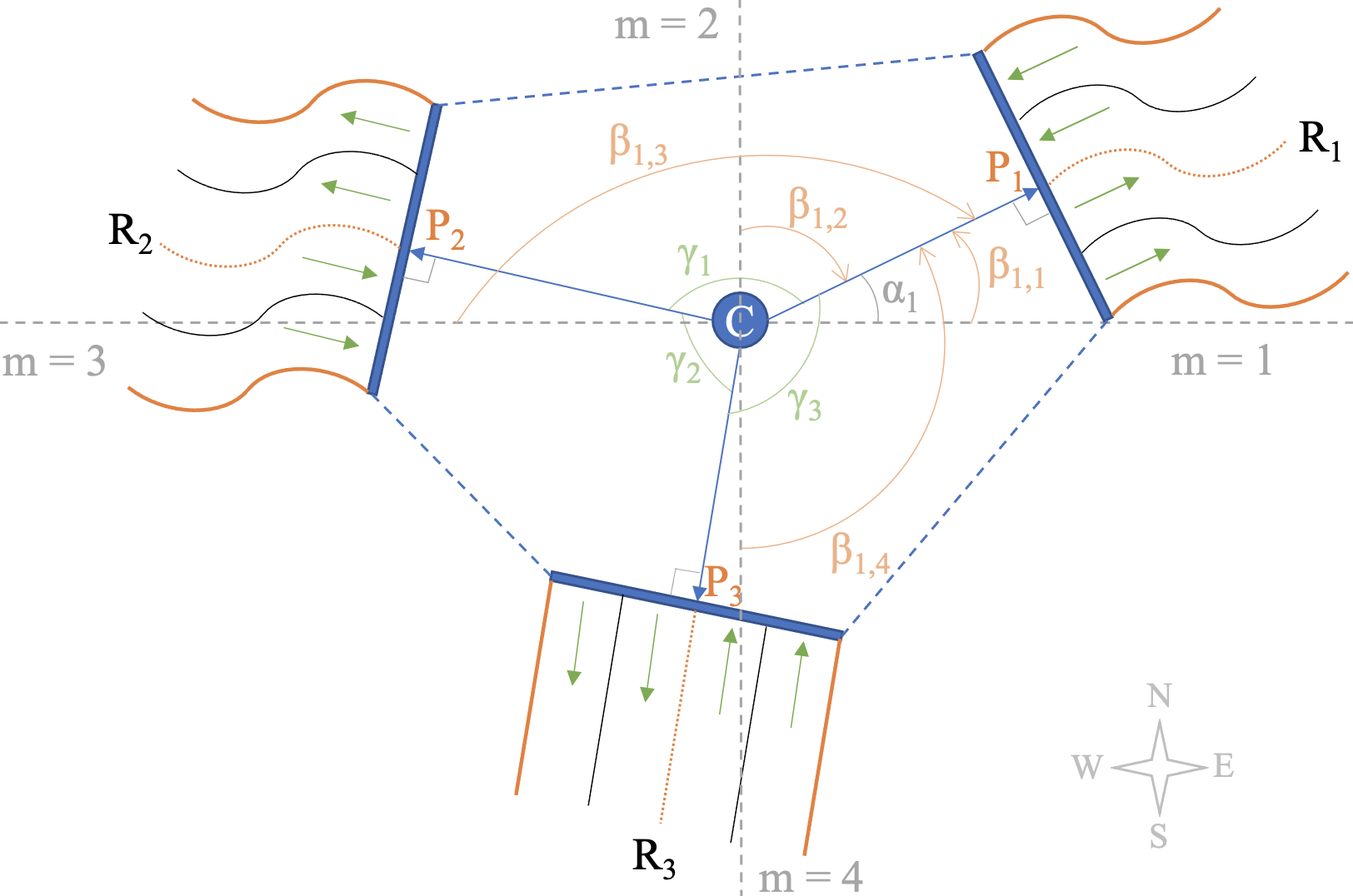

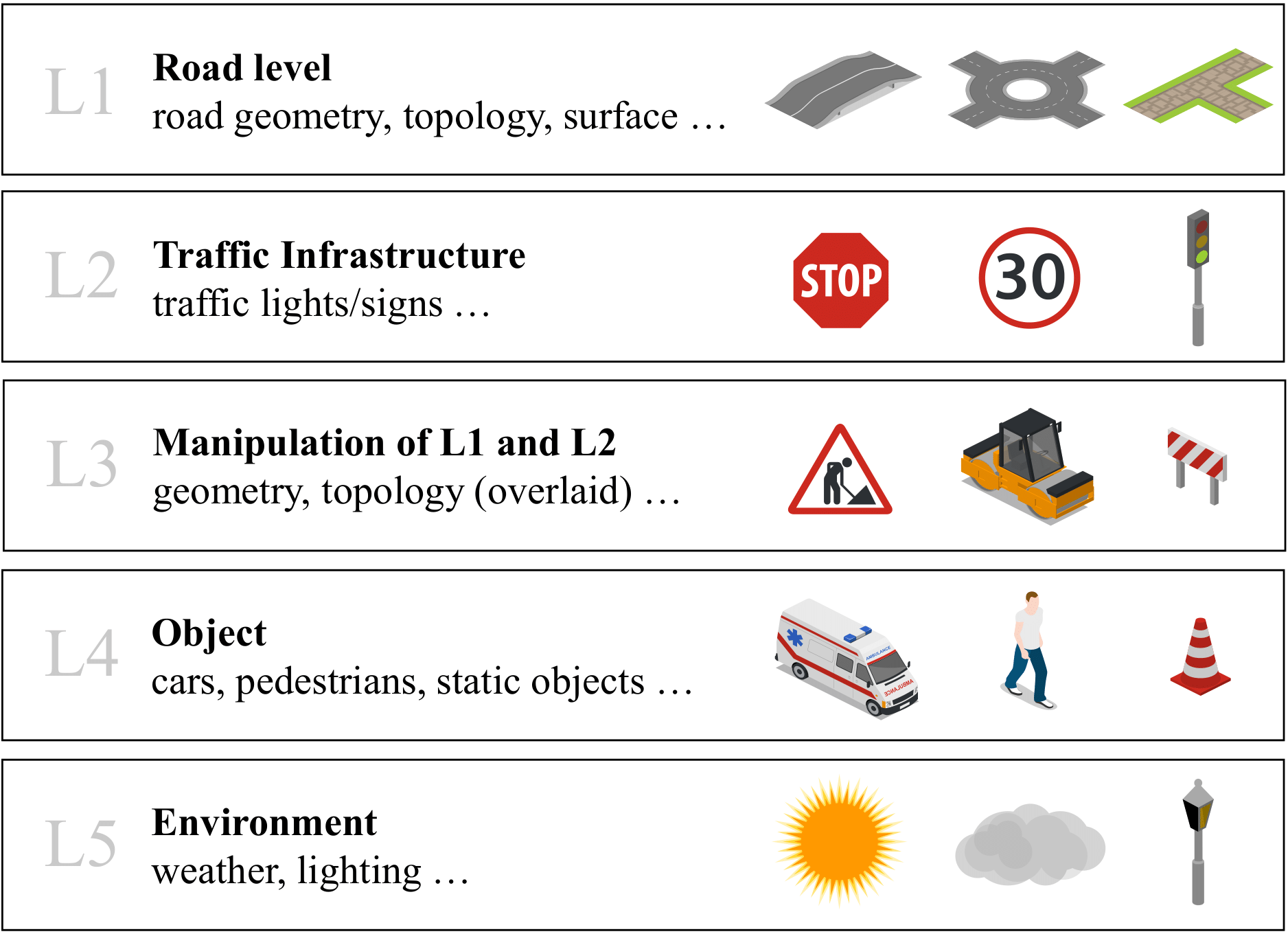

Ziyuan Zhong, Yun Tang, Yuan Zhou, Vania de Oliveira Neves, Yang Liu, Baishakhi Ray. arXiv, 2021. A survey on scenario-based testing for Automated Driving Systems in high-fidelity simulation.


Ziyuan Zhong, Gail Kaiser, Baishakhi Ray. Transactions on Software Engineering (TSE), 2022. AutoFuzz uses a grammar-based, learning-guided fuzzing technique to efficiently find violations of Autonomous Driving Systems (ADSs).


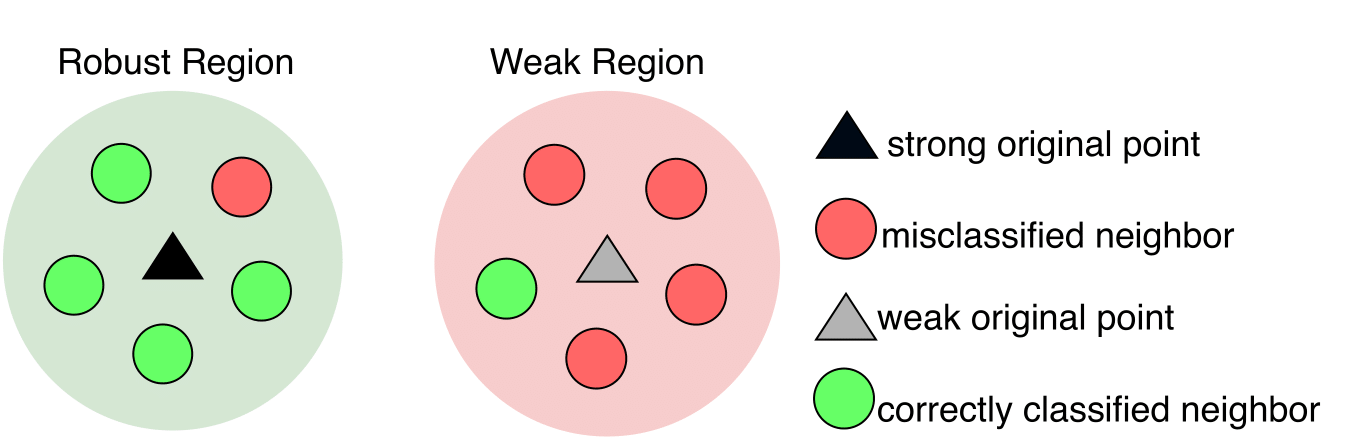

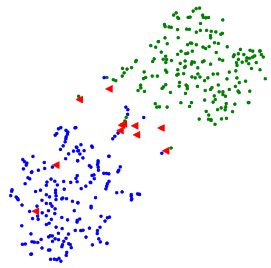

Ziyuan Zhong, Yuchi Tian, Baishakhi Ray. FASE, 2021. DeepRobust identifies input images for which small variations may lead to erroneous DNN behavior.


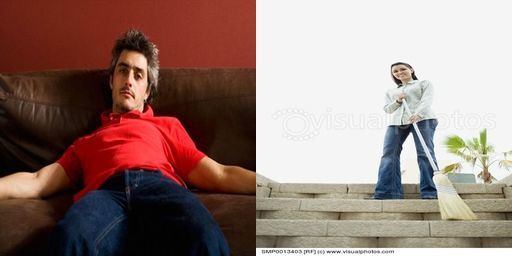

Yuchi Tian*, Ziyuan Zhong*, Vicente Ordonez, Gail Kaiser, Baishakhi Ray. ICSE, 2020. We developed a testing technique, DeepInspect, to automatically detect class-based confusion and bias errors in DNN-driven image classification software.


Chengzhi Mao, Ziyuan Zhong, Junfeng Yang, Carl Vondrick, Baishakhi Ray. NeurIPS, 2019. We propose regularizing the representation space under adversarial attack with metric learning to produce more robust classifiers.


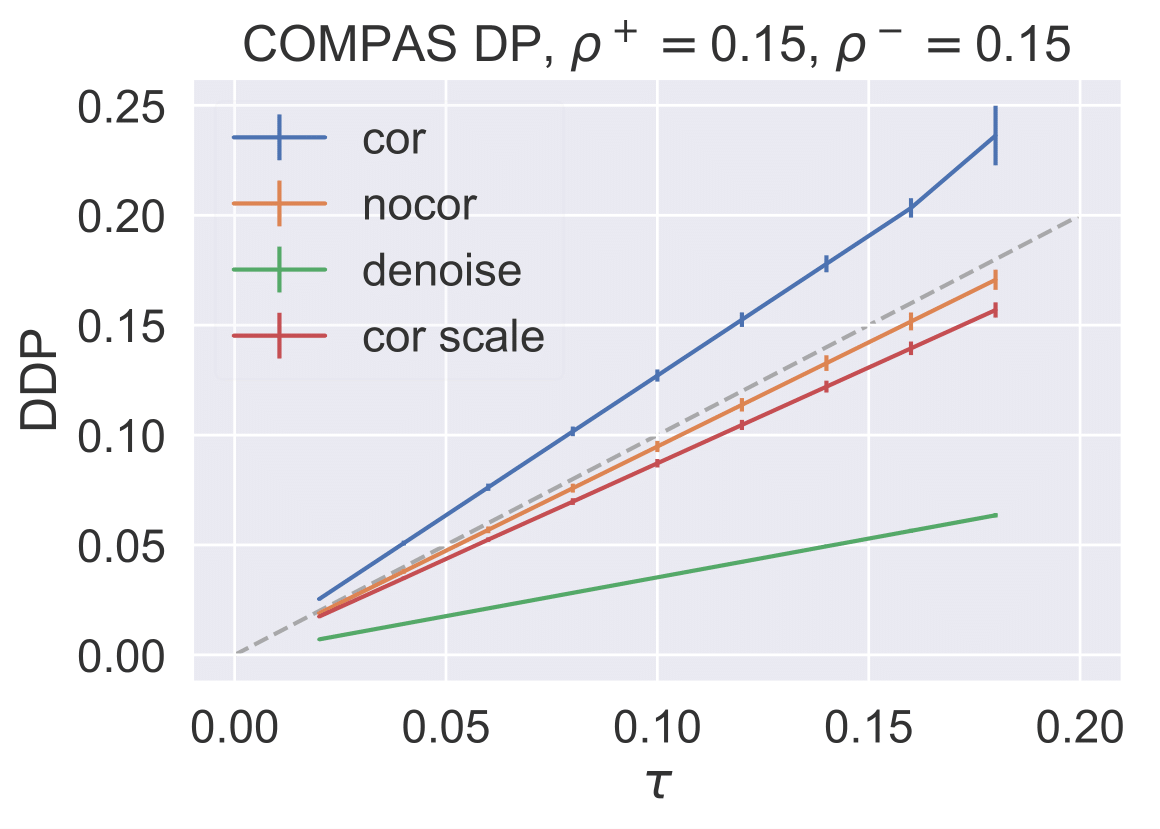

Alexandre Lamy*, Ziyuan Zhong*, Aditya Krishna Menon, Nakul Verma. NeurIPS, 2019. We showed, both theoretically and empirically, that even under the very general mutually contaminated (MC) learning noise model on the sensitive feature, fairness can still be preserved by scaling the input unfairness tolerance parameter.


Rohan Chandra, Ziyuan Zhong, Justin Hontz, Val McCulloch, Christoph Studer, Tom Goldstein. Asilomar Conference on Signals, Systems, and Computers, 2017. PhasePack is a collection of subroutines for solving classical phase retrieval problems. It contains implementations of both classical and contemporary phase retrieval routines.


Reviewer: NeurIPS 2020-2024, ICLR 2021-2025, ICML 2021-2024, ICRA 2023-2024, TOSEM

Lead Teaching Assistant: COMS 4115 Programming Languages & Translators (Fall 2023)

Teaching Assistant: COMS 4115 Programming Languages & Translators (Spring 2023), COMS 4771 Machine Learning (Summer 2018, Spring 2019), ELEN 4903 Machine Learning (edX) (Spring 2018)